-

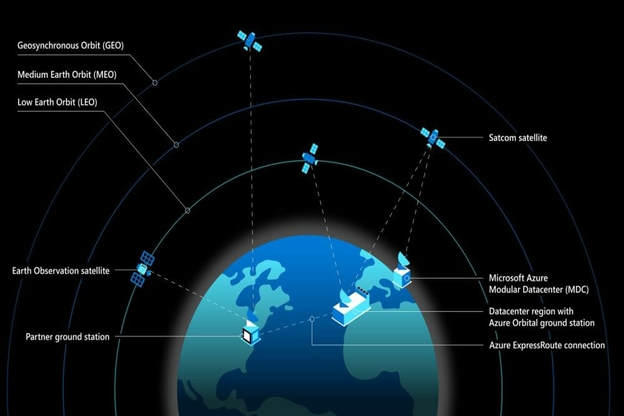

Azure Space in its Entirety

The space community is expanding quickly, and innovation is lowering the barriers to entry for both public and private-sector organisations. […]

-

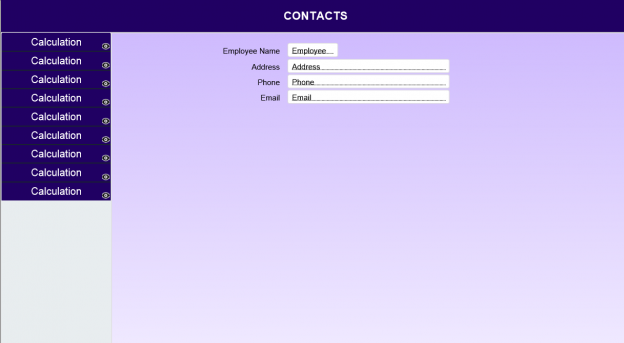

Guide to Avoid Navigation Rework in FileMaker

Have you ever faced the turmoil of adding a new module to every layout after the standard menu navigation was created? […]

-

A Developer’s Tale: Using Microsoft technologies to integrate Myzone device with Genavix application

It is no secret that digital technology is slowly shaping the future of the healthcare industry. The use of fitness […]

-

Barcode Scanning for a web-based application

In this article I will share some information about a recent barcode scanning implementation we did for a web-based […]

-

Device and Browser Testing Strategies

Testing without proper planning can cause major problems for an app release, as it can result in compromised software quality […]

-

Web API security using JSON web tokens

Today, data security during financial transactions is critical. The protection of sensitive user data should be a […]

-

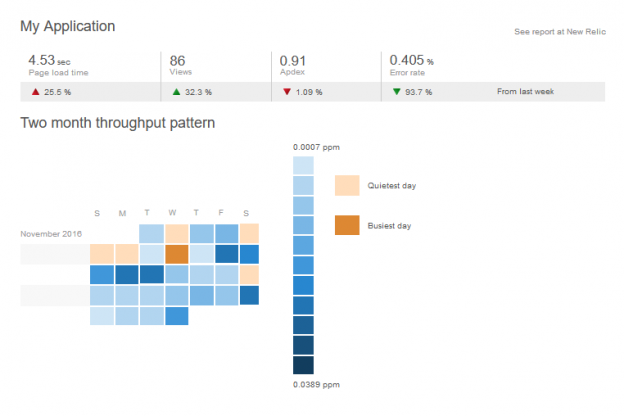

Improve Performance of Web Applications

We all know how frustrating it is to watch the progress spinner go on and on while navigating through […]

-

How MetaSys handled performance issues related to Entity Framework

In building web applications for clients, two important factors we at MetaSys focus on are performance and speed of development. […]

-

How I cracked my MCSA Web Applications certification exam

It has been a couple of months since I earned my MCSA certification in Web Applications, and in this article, […]

-

InBody Integration for biometric and blood pressure data into a web application

People today are more health-conscious than ever before, and digital technology is playing an important role in this development. Thanks […]

-

React Native vs Native app development

React Native has some exciting features that make it popular in the developer community. Many popular apps such as Instagram, […]