-

What is EWS?

Exchange Web Services (EWS) is an application programming interface (API) by Microsoft that allows programmers to fetch Microsoft Exchange items including […]

-

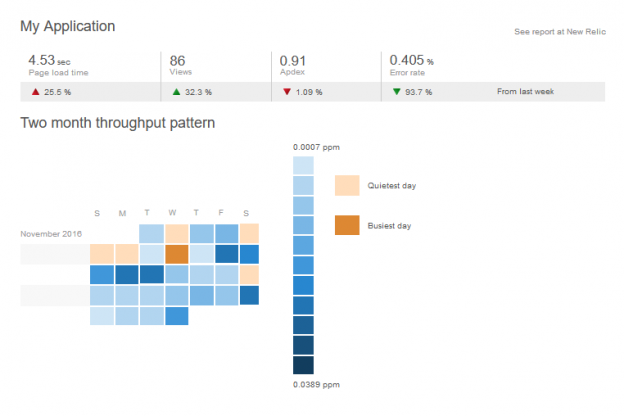

Improve Performance of Web Applications

We all know how frustrating it is to watch the progress wheel spin on and on while navigating through […]

-

How MetaSys handled performance issues related to Entity Framework

In building web applications for clients, two important factors we at MetaSys focus on are performance and speed of development. […]

-

InBody Integration for biometric and blood pressure data into a web application

People today are more health-conscious than ever before, and digital technology is playing an important role in this development. Thanks […]

-

Using the NReco PDF writing tool

These days, financial, marketing and e-commerce websites allow us to download reports and receipts as PDF files. The PDF file […]

-

A Case Study – Building a Dashboard using Google Charts in ASP.NET

Tracking KPIs, metrics and any other relevant data is important for any business looking to improve its performance, and proper […]

-

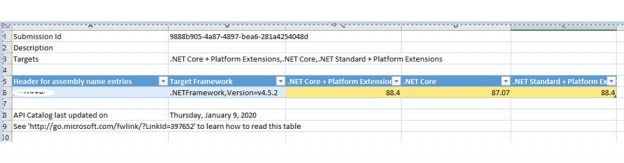

Converting an MVC Web App to a .NET Core Web App

Like many others, we have been working on MVC 5-based web applications since 2013. With Microsoft planning significant […]